Introduction to Experiments

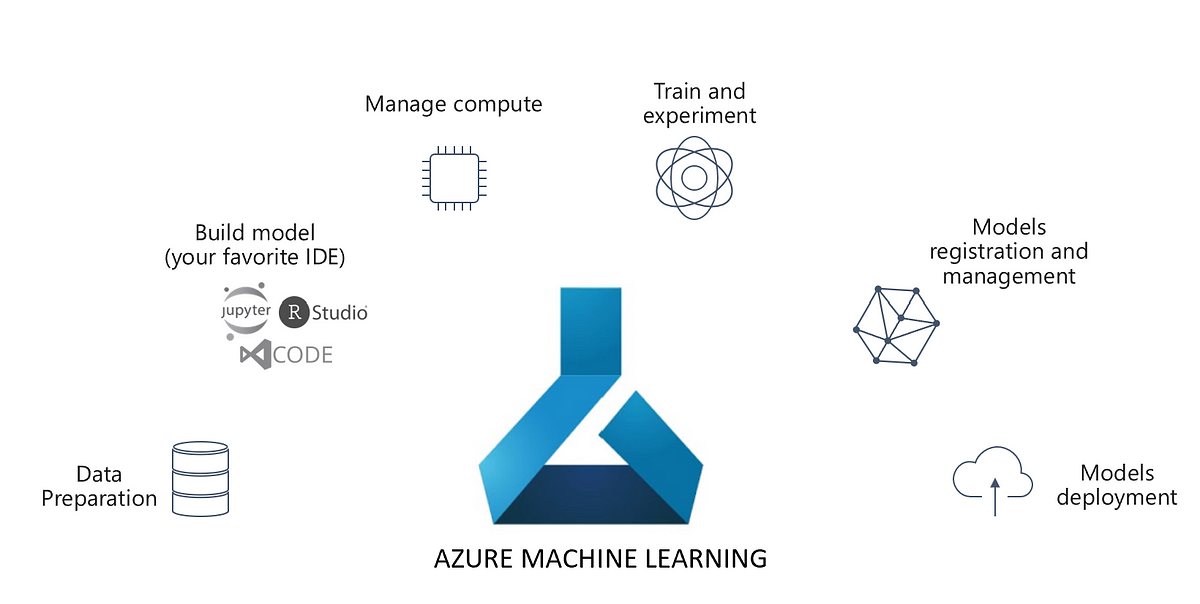

Continuous experimentation is key to MLOps, so tracking every iteration and artefact of an experiment is key to success in data science. MLOps platforms and frameworks therefore come with provisions to create experiments and track them, and cloud services like Microsoft Azure Machine Learning are no exception.

Two libraries can be used to run experiments in Azure Machine Learning, viz. the Azure ML Core Experiment library and MLflow, the open source library from Databricks. We will introduce both of them in this article, starting with Azure ML.

Azure ML Core Experiment

An experiment is a named process in Azure Machine Learning that can be performed multiple times. Each iteration of the experiment is called a run, and every run is logged under the same experiment. In Azure ML, experiments are run in two ways, viz. inline and via ScriptRunConfig. There is also a special type of experiment, the Pipeline experiment, which runs Azure Machine Learning Pipelines. Let’s go through each of them.

Inline Experiment

As the name suggests, inline experiments are created and run within the script to be executed. Here is a sample code:

    from azureml.core import Experiment, Workspace

    # load the workspace (assumes a config.json is available, e.g. on a compute instance)
    ws = Workspace.from_config()

    # create an experiment variable
    experiment = Experiment(workspace=ws, name="my-experiment")

    # start the experiment
    run = experiment.start_logging()

    # experiment code goes here

    # end the experiment
    run.complete()
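If the code between start_logging() and complete() raises an exception, the run in the sample above is never closed. Here is a minimal sketch of one way to guard against that. It assumes only the start_logging, log, complete and fail methods of the Azure ML Experiment and Run classes; the tracked_run helper is a hypothetical name of ours, not part of the SDK.

```python
from contextlib import contextmanager


@contextmanager
def tracked_run(experiment):
    """Start a run on `experiment` and always close it (hypothetical helper)."""
    run = experiment.start_logging()
    try:
        # hand the run to the caller so it can log metrics
        yield run
    except Exception as exc:
        # mark the run as failed, then let the error propagate
        run.fail(error_details=str(exc))
        raise
    else:
        # no error: mark the run as completed
        run.complete()
```

With an experiment object created as in the sample above, the experiment code would go inside a `with tracked_run(experiment) as run:` block, for example `run.log("observations", 100)`.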

These experiments are suitable for rapid exploration and prototyping. However, this code runs on the local Azure ML compute instance. What if you want to run it on a different compute cluster, or run the script with multiple parameters on different compute clusters? Written inline, the code is neither scalable nor reusable. The ScriptRunConfig class addresses these challenges. For a detailed example, refer to this article of ours: Explainable Machine Learning with Azure Machine Learning

Script Experiment

A script experiment keeps the experiment code in its own script file and submits it through the ScriptRunConfig class. The class takes many parameters; for the full list, refer to this link. Here are some key ones:

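- source_directory : The local directory that contains the script and any files it needs.

- script : The path of the script to run, relative to source_directory.

- compute_target : The compute target to run on, given as a name or as a compute target object.

- environment : The environment (Python packages and, optionally, a Docker image) used for the run.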
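The parameters above can be combined to run one script with several parameter values on several compute clusters. The following is a minimal sketch under stated assumptions, not a definitive implementation: the helper names build_run_settings and submit_all, the --learning-rate flag and the cluster names are hypothetical, and the script is assumed to parse that flag itself. The Azure ML SDK is imported lazily so that the settings can be built and inspected without it.

```python
from itertools import product


def build_run_settings(source_directory, script, compute_targets, learning_rates):
    """Return one dict of ScriptRunConfig keyword arguments per
    (compute target, learning rate) pair (hypothetical helper)."""
    return [
        {
            "source_directory": source_directory,
            "script": script,
            "compute_target": target,
            # passed to the script as command-line arguments
            "arguments": ["--learning-rate", str(rate)],
        }
        for target, rate in product(compute_targets, learning_rates)
    ]


def submit_all(ws, experiment_name, settings, environment=None):
    """Submit one run per settings dict under a single experiment."""
    # imported here so build_run_settings works without the SDK installed
    from azureml.core import Experiment, ScriptRunConfig

    experiment = Experiment(workspace=ws, name=experiment_name)
    return [
        experiment.submit(ScriptRunConfig(environment=environment, **kwargs))
        for kwargs in settings
    ]
```

For example, `build_run_settings("my_dir", "script.py", ["cluster-a", "cluster-b"], [0.01, 0.1])` yields four configurations, and every resulting run is logged under the same experiment.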

Again, to dive into a detailed example, refer to this article of ours: Explainable Machine Learning with Azure Machine Learning

Pipeline Experiment

Machine Learning workflows rarely run as a single monolithic script; multiple steps combine to form a pipeline. Hence, Azure Machine Learning provides the Pipeline class, which can be submitted as a Pipeline Experiment. Here is a pseudo example.

First, define the steps, for example a data preparation step and a model training step:

    from azureml.pipeline.steps import PythonScriptStep

    # Step to run a Python script
    step1 = PythonScriptStep(name='prepare data step',
                             source_directory='script_dir',
                             script_name='data_prep.py',
                             compute_target='aml-compute-cluster')

    # Step to train a model
    step2 = PythonScriptStep(name='train model step',
                             source_directory='script_dir',
                             script_name='train_model.py',
                             compute_target='aml-compute-cluster')

Next, combine the steps using the Pipeline object.

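    from azureml.pipeline.core import Pipeline
    from azureml.core import Experiment

    # The steps declare no data dependency, so enforce the order explicitly;
    # otherwise they may be scheduled in parallel
    step2.run_after(step1)

    # Construct the pipeline (ws is an existing Workspace object)
    train_pipeline = Pipeline(workspace=ws, steps=[step1, step2])

    # Create an experiment and run the pipeline
    experiment = Experiment(workspace=ws, name='training-pipeline')
    pipeline_run = experiment.submit(train_pipeline)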

For a more detailed example, read this article of ours: Azure Machine Learning Pipelines for Model Training.

MLflow

Along with Experiment, Azure Machine Learning supports the MLflow library. MLflow is an open source tracking library by Databricks. This is useful when your organization is already using MLflow. Moreover, it makes Databricks integration with Azure Machine Learning easier.

MLflow runs in tandem with Azure ML Experiments. Hence, one can run it inline or as a script, similar to Azure ML Core Experiments. Here are the pseudo examples:

MLflow Inline

Here is an example of MLflow inline. It is like a core inline experiment, but uses the MLflow API:

    from azureml.core import Experiment
    import pandas as pd
    import mlflow

    # Set the MLflow tracking URI to the workspace (ws is an existing Workspace object)
    mlflow.set_tracking_uri(ws.get_mlflow_tracking_uri())

    # Create an Azure ML experiment in your workspace
    experiment = Experiment(workspace=ws, name='my-experiment')
    mlflow.set_experiment(experiment.name)

    # start the MLflow experiment
    with mlflow.start_run():
        print("Starting experiment:", experiment.name)

        # load the data and count the rows
        data = pd.read_csv('data.csv')
        row_count = len(data)

        # log the row count
        mlflow.log_metric('observations', row_count)

MLflow as a Script

Here is an example of an MLflow experiment as a script. The first code block is the experiment script itself (script.py):

    import pandas as pd
    import mlflow

    # start the MLflow run; the experiment itself is chosen when the script is submitted
    with mlflow.start_run() as run:
        print("Starting run:", run.info.run_id)

        # load the data and count the rows
        data = pd.read_csv('data.csv')
        row_count = len(data)

        # log the row count
        mlflow.log_metric('observations', row_count)

The ScriptRunConfig for the above experiment is:

    from azureml.core import Experiment, ScriptRunConfig, Environment
    from azureml.core.conda_dependencies import CondaDependencies

    # Create a Python environment for the experiment
    mlflow_env = Environment("mlflow-env")

    # Ensure the required packages are installed
    packages = CondaDependencies.create(conda_packages=['pandas', 'pip'],
                                        pip_packages=['mlflow', 'azureml-mlflow'])
    mlflow_env.python.conda_dependencies = packages

    # Create a script config
    script_config = ScriptRunConfig(source_directory='my_dir',
                                    script='script.py',
                                    environment=mlflow_env)

    # submit the experiment (ws is an existing Workspace object)
    experiment = Experiment(workspace=ws, name='mlflow-script')
    run = experiment.submit(config=script_config)
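The experiment script above hard-codes data.csv. To run the same script against different inputs, the file path could be passed as a command-line argument instead. The following is a minimal sketch, not taken from the official examples: the --data-path flag and the parse_args and count_rows helpers are hypothetical names, the standard csv module stands in for pandas to keep the sketch dependency-free, and mlflow is imported lazily inside main.

```python
import argparse
import csv


def parse_args(argv=None):
    """Parse the (hypothetical) --data-path flag; defaults to data.csv."""
    parser = argparse.ArgumentParser(description="Count rows and log them with MLflow")
    parser.add_argument("--data-path", default="data.csv", help="CSV file to read")
    return parser.parse_args(argv)


def count_rows(path):
    """Count the non-empty data rows of a CSV file, excluding the header line."""
    with open(path, newline="") as handle:
        rows = sum(1 for row in csv.reader(handle) if row)
    return max(rows - 1, 0)


def main(argv=None):
    # imported here so the helpers above work without mlflow installed
    import mlflow

    args = parse_args(argv)
    with mlflow.start_run():
        # record which file was used, then log the row count
        mlflow.log_param("data_path", args.data_path)
        mlflow.log_metric("observations", count_rows(args.data_path))
```

The script would end with a call to main(), and the path could then be supplied at submission time through the arguments parameter of ScriptRunConfig, for example arguments=['--data-path', 'data.csv'].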

Conclusion

We hope you found this article informative. All the explanations and code snippets are inspired by the official Microsoft documentation. However, we make no guarantees regarding the completeness or accuracy of the content.