For a successful Machine Learning or Data Science practice, the following elements are key:

- Business Case

- Quality Data

- Skilled Teams

- Technology

- Risk Management

Business Case

No Data Science or Machine Learning project can take off without alignment with the business. The business owners must have an appetite for making data-driven decisions. A fair objection they may raise is: how can they trust a model? After all, a model is only as good as the data it is trained on. This brings us to the next element of a Data Science practice: data quality.

Quality Data

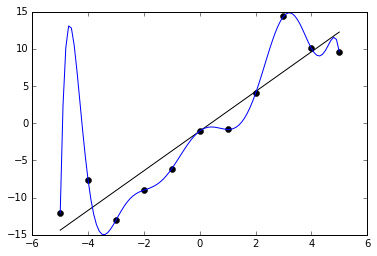

At the end of the day, Machine Learning is garbage in, garbage out. Data Scientists are often said to spend around 80 percent of their time preparing and cleansing data, which makes ML model development and maintenance costly. Yet organizations rarely invest in improving the quality of the data itself. Typical data quality issues include missing values, duplicate records, and anomalous values. Improving data quality not only leads to better models but also reduces the operational overhead of cleansing pipelines. This calls for a strategic approach to data quality from data teams.
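To make the three issue types above concrete, here is a minimal sketch of a data quality check in plain Python. The function name `data_quality_report`, the sample records, and the choice of a median-based (modified z-score) outlier rule with a 3.5 threshold are all assumptions made for illustration, not a prescribed method. A real pipeline would more likely use a dataframe library or a dedicated validation tool.

```python
from statistics import median


def data_quality_report(rows, numeric_field, threshold=3.5):
    """Count missing values, duplicate rows, and anomalous values.

    rows: list of dicts with hashable values (hypothetical record format).
    numeric_field: name of the field to scan for anomalies.
    threshold: cutoff for the modified z-score (an assumed default).
    """
    # Missing values: any field that is None or an empty string.
    missing = sum(
        1 for row in rows for value in row.values() if value is None or value == ""
    )

    # Duplicates: rows whose full content repeats an earlier row.
    seen = set()
    duplicates = 0
    for row in rows:
        key = tuple(sorted(row.items()))
        if key in seen:
            duplicates += 1
        else:
            seen.add(key)

    # Anomalies: modified z-score based on the median absolute deviation (MAD),
    # which is less distorted by the outliers themselves than mean and stdev.
    values = [
        row[numeric_field]
        for row in rows
        if isinstance(row.get(numeric_field), (int, float))
    ]
    anomalies = []
    if values:
        med = median(values)
        mad = median(abs(v - med) for v in values)
        if mad > 0:
            anomalies = [
                v for v in values if 0.6745 * abs(v - med) / mad > threshold
            ]

    return {"missing": missing, "duplicates": duplicates, "anomalies": anomalies}


# Hypothetical sample records for illustration only.
sample = [
    {"id": 1, "amount": 10.0},
    {"id": 2, "amount": 12.0},
    {"id": 2, "amount": 12.0},   # exact duplicate of the previous row
    {"id": 3, "amount": None},   # missing value
    {"id": 4, "amount": 11.0},
    {"id": 5, "amount": 500.0},  # anomalous value
]
print(data_quality_report(sample, "amount"))
```

Running such a report at the point where data enters the organization, rather than inside each model's cleansing code, is one way to treat data quality as a shared concern instead of a per-project cost.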

Skilled Teams

For a successful Data Science practice, the right people with diverse skill sets must come together to form a cohesive unit. This includes Data Scientists, Analysts, Data Engineers, and others. However, organizations often make the mistake of hiring Data Scientists from a research background with little or no engineering experience and expecting them to handle everything from data gathering to model deployment. This leads to failed projects and dissatisfied teams. See our article on roles in ML/AI to learn more.

Technology

You may have all the business alignment, the right strategies, and the most skilled teams. But without solid technology backing them, an organization’s data science journey won’t go far. You can perform basic wrangling and modeling on a Data Scientist’s laptop. However, to train and serve models in an automated, scalable fashion, an organization needs access to robust technical infrastructure.

Risk Management

There is a lot of talk about Ethical AI, and it is essential, since decisions made by AI can have legal consequences. This places moral obligations on the builders of AI products. Traditional security paradigms apply as well. Hence, security and responsibility must be built into AI products by design, which calls for extensive collaboration with security and legal personnel.

Conclusion

This article is inspired by a Harvard Business Review article. However, we have rewritten it based on our own experience, so we make no guarantees regarding its accuracy or completeness.