With the advent of Big Data, technology paradigms have shifted from relational databases to data lakes. Data comes in a wide variety, larger velocity and huge volumes. Hence, data manipulation methodologies have changed rapidly. This entails changes in the tech stack to achieve similar objectives. For instance, prior to big data, in the relational world, SQL was the only programming language to govern the data. However, with big data technologies, a variety of programming languages have emerged. Amongst them, Python is the foremost. In fact, it is becoming the defacto programming language of the Big Data and Data Science world.

Now, coming back to data manipulation, one of the tasks is data erasure. Owing to a variety of compliance and security requirements, we need to delete certain data at times. In the world of SQL, this can be achieved with a simple SQL stored procedure with a WHERE clause. However, with Big Data, we should not remain restricted to SQL, especially when we have a plethora of options.

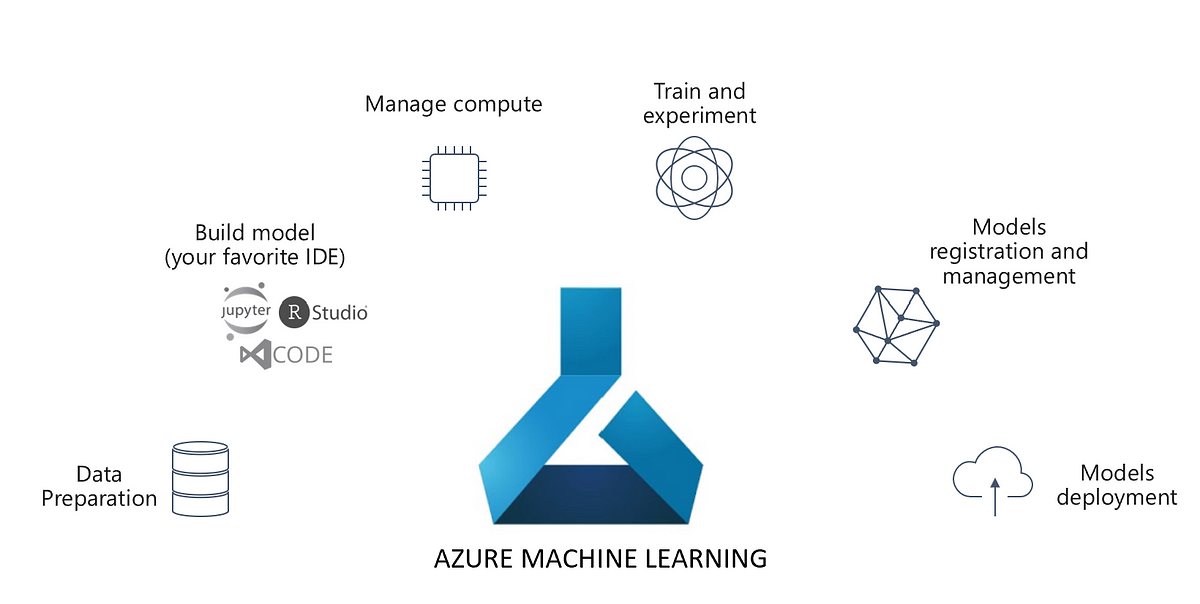

Coming to the big data world, we have multiple ecosystems. These options have exploded, especially with the emergence of cloud technologies. Amongst them, Azure Databricks is continuously growing. Databricks is built on top of Apache Spark. Hence, it is Spark with certain additions. Read more about it here:

Also Read: Why Databricks Is Gaining Popularity?

Azure Databricks File systems

Having said that, Databricks has contributed significantly on top of Apache Spark. This includes some really cool library and API for data manipulation and maintenance. For instance, they have added Databricks Koalas, which runs Pandas API on spark. This is a boon to Data Scientists and ML engineers who love Pandas. Nevertheless, it is an icing on the cake to have a file system API on spark for data manipulation. These APIs come along with dbutils module/package. For more on dbutils, refer to this link.

Also Read: Databricks Koalas: the bridge between pandas and spark

Within dbutils, we have the fs submodule which helps us with our objective. It is also called as DbfsUtils. In your Databricks notebook, type dbutils.fs.help() to know more about it.

Azure Data Lake data manipulation

Back to our scenario, how do we manage compliance requirements with Azure Databricks in Azure Data Lake? First things first, you need to be able to connect to Azure Data Lake using Azure Databricks, before manipulating the same. For details on how to connect to Azure Data Lake Gen2 using Azure Databricks, refer to this link:

Within dbutils.fs, use ls function. It takes the directory as an input parameter and returns the files contained in it in a list format.

filelist=dbutils.fs.ls(input_file_path)

The result will be a list of lists with path and the name of the file. Extract the file paths and names in separate lists for further convenience.

for i in range(len(filelist)):

if filelist[i].isDir()==True:

subdirectories.append(filelist[i].path)

else :

filepaths.append(filelist[i].path)

filenames.append(filelist[i].name)

Further, use these file paths and names in a loop to read and manipulate data in dataframes.

read_file = 'df_'+filename+'= spark.read.format("csv") \

.option("inferSchema", infer_schema) \

.option("header", first_row_is_header) \

.option("sep", delimiter) \

.load(filepath)'

exec(read_file)

#Remove the records

filtered_data = 'df_'+filename+'_filtered=df_'+filename+'[~df_'+filename+'.ID.isin(('+str(list_of_ids)+'))]'

exec(filtered_data)

#Output Files

output_folder_path = filepath.replace(".tsv", "")

output_file_path =filepath

#Write to output folder

overwrite_file = 'df_'+filename+'_filtered.repartition(1).write.mode("overwrite") \

.format("com.databricks.spark.csv") \

.option("ignoreTrailingWhiteSpace", ignore_trailing_white_space) \

.option("ignoreLeadingWhiteSpace", ignore_leading_white_space) \

.option("header", first_row_is_header) \

.option("sep", delimiter) \

.csv(output_folder_path )'

Conclusion

The above code is simply boilerplate stuff for reference. Actual implementations may vary. Furthermore, this is an article for information purposes. We do not make any guarantees whatsoever regarding the completeness and accuracy.