Last month Microsoft announced that Data Factory is now a ‘Trusted Service’ in the Azure Storage and Azure Key Vault firewalls. Accordingly, Data Factory can use Managed Identity authentication to access Azure Storage services such as Azure Blob Storage or Azure Data Lake Storage Gen2. Please note that this feature is not available with ADF Data Flows. Before looking at its impact, let us go through the different authentication mechanisms through which Azure Data Factory can access Azure Storage. These mechanisms are Account Key, Service Principal and Managed Identity.

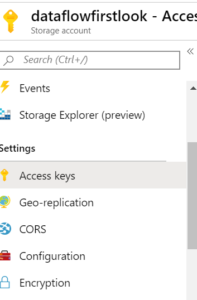

Account Key

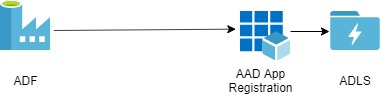

Service Principal

The second way to authenticate ADF with the storage account is service principal authentication. In this approach, we use an Azure Active Directory (AAD) application. This application acts as a handshaking element between ADF and Azure Storage/Azure Data Lake.

The steps below explain the service principal approach. Moreover, this Microsoft doc provides sufficient details to get started.

Step 1: Create App registration

We assume that you have Azure storage and Azure Data Factory up and running. If you haven’t done so, go through these documents: Quickstart: Create a data factory by using the Azure Data Factory UI and Create an Azure Data Lake Storage Gen2 storage account.

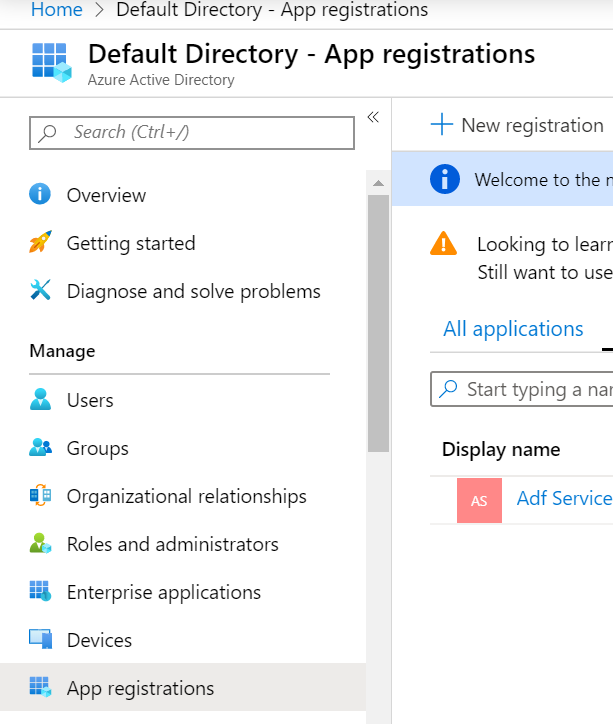

In order to create an AAD application, go to the left-hand resources pane in the Azure portal and click on Azure Active Directory.

Click on App registrations in Azure Active Directory and create a new app.

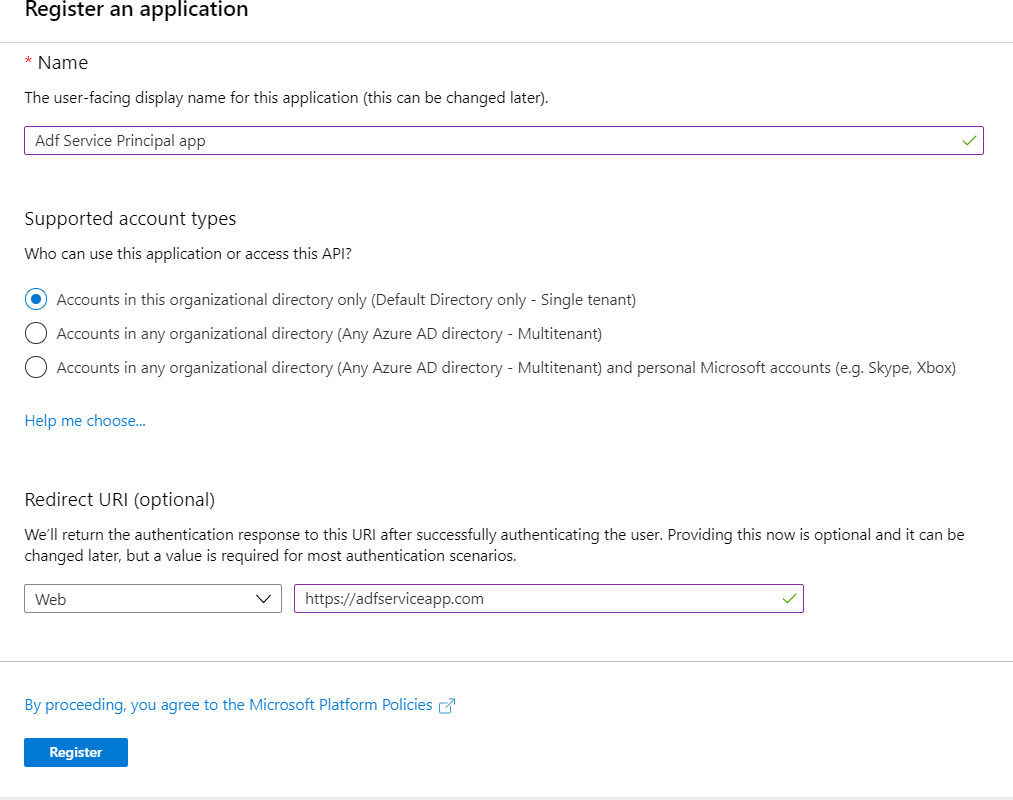

Assign a name and URL to your app as shown below:

Click on register and your app is ready.

Step 2: Permit App to access ADL

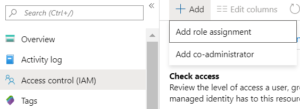

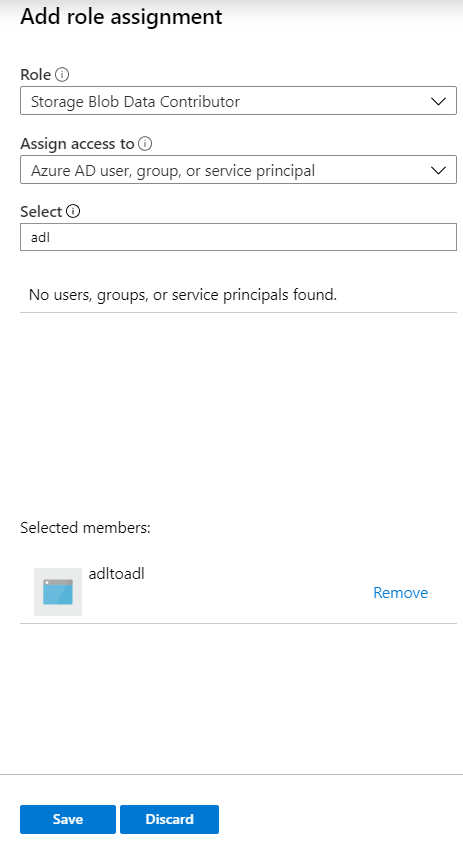

Once the app is created, it needs to be granted access to your storage account. To do so, open the storage account you created and go to Access control (IAM). Click on Add and select ‘Add role assignment’.

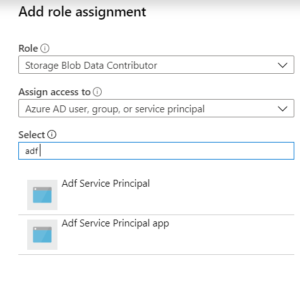

This opens a pane on the right-hand side of the portal. Select the role as ‘Storage Blob Data Contributor’ and select your app to be added.
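If you would rather script this step than click through the portal, the role assignment corresponds to a single Azure Resource Manager request. The sketch below only builds the request URL and body; it does not call Azure. The subscription, resource group, storage account and principal ID are placeholder values, `build_role_assignment` is a hypothetical helper, and the role GUID and API version are assumptions that should be checked against the Azure built-in roles documentation before use.

```python
import json
import uuid

# Assumed built-in role ID for 'Storage Blob Data Contributor';
# verify it against the Azure built-in roles list.
STORAGE_BLOB_DATA_CONTRIBUTOR = "ba92f5b4-2d11-453d-a403-e96b0029c9fe"


def build_role_assignment(subscription_id, resource_group, storage_account, principal_id):
    """Return (url, body) for a role-assignment PUT request (hypothetical helper)."""
    # The role is scoped to the storage account itself.
    scope = (
        f"/subscriptions/{subscription_id}/resourceGroups/{resource_group}"
        f"/providers/Microsoft.Storage/storageAccounts/{storage_account}"
    )
    assignment_name = str(uuid.uuid4())  # role assignment names are GUIDs
    url = (
        f"https://management.azure.com{scope}"
        f"/providers/Microsoft.Authorization/roleAssignments/{assignment_name}"
        "?api-version=2022-04-01"  # assumed API version
    )
    body = {
        "properties": {
            "roleDefinitionId": (
                f"/subscriptions/{subscription_id}/providers/Microsoft.Authorization"
                f"/roleDefinitions/{STORAGE_BLOB_DATA_CONTRIBUTOR}"
            ),
            # Object ID of the app's service principal, not its Application ID.
            "principalId": principal_id,
        }
    }
    return url, body


# Placeholder values for illustration only.
url, body = build_role_assignment(
    "00000000-0000-0000-0000-000000000000",
    "my-resource-group",
    "mystorageaccount",
    "11111111-1111-1111-1111-111111111111",
)
print(url)
print(json.dumps(body, indent=2))
```

Sending this request would still need an authenticated client; the portal steps above achieve the same result without one.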

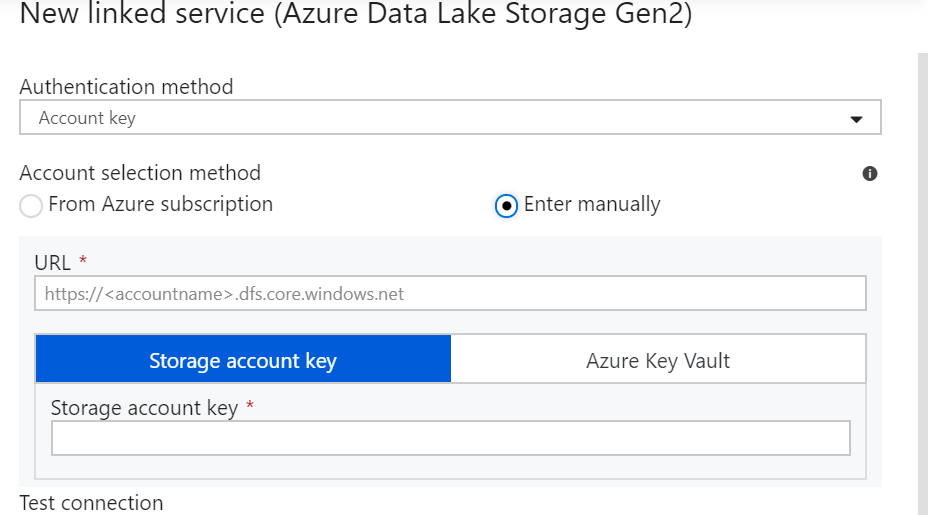

Step 3: Authenticate using Service Principal

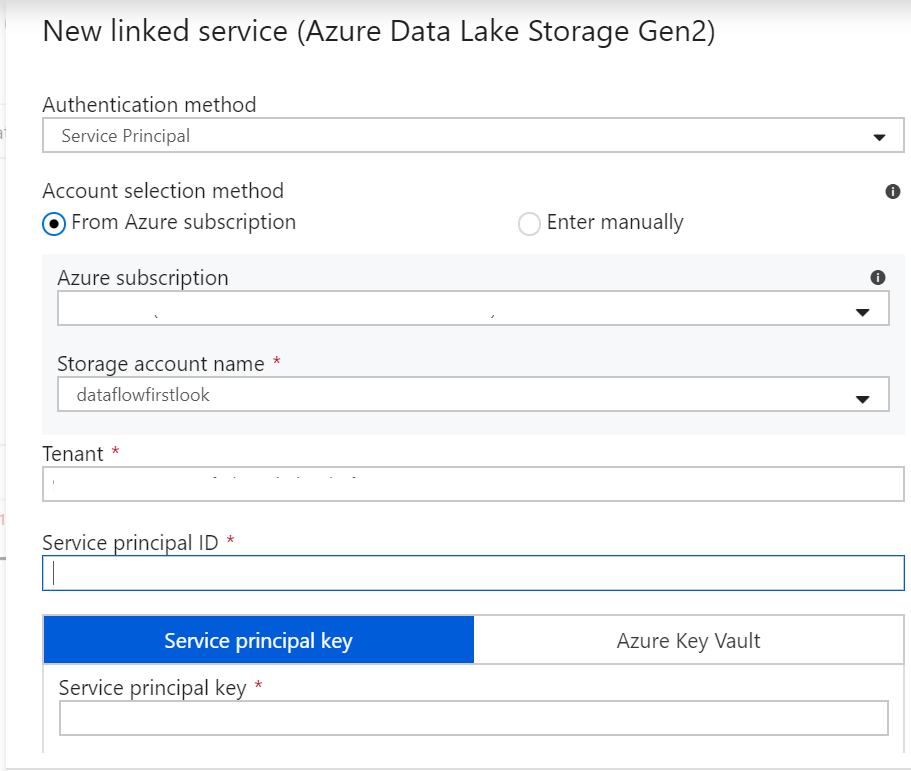

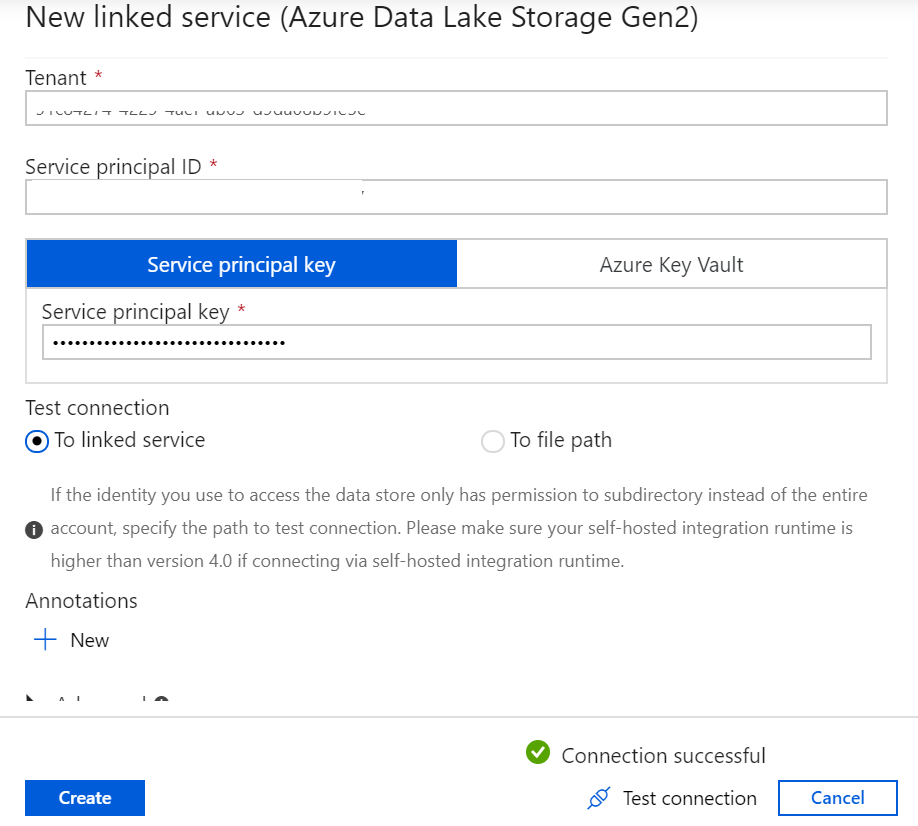

Lastly, we need to connect to the storage account in Azure Data Factory. Go to your Azure Data Factory source connector and select ‘Service Principal’ as shown below.

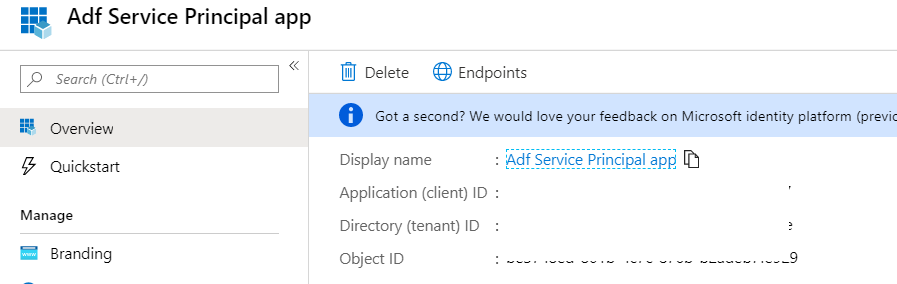

Select your Azure Subscription and Storage account name. The remaining details, viz. Tenant, Service principal ID and Service principal key, come from the app you created. Go to its Overview section: the Directory ID is the Tenant, while the Application ID is the Service principal ID.

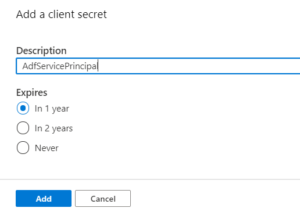

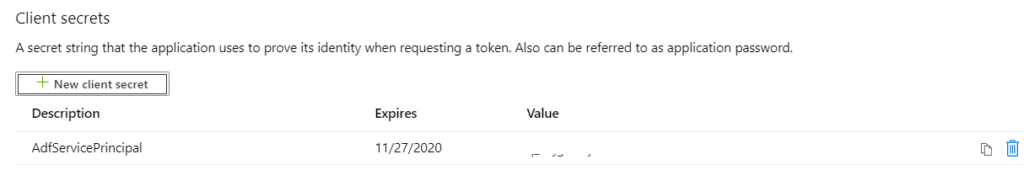

Furthermore, to retrieve the Service principal key, go to Certificates and secrets and create a New client secret.

Copy the secret immediately and save it in a secure location (preferably Azure Key Vault). Use this copied secret as the Service principal key.

Putting all the bricks in place, we can authenticate the ADF to access the Azure Data Lake gen2/Azure Storage.
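The same connection can also be expressed as a linked service definition in JSON, which is what the ADF UI saves behind the scenes. The sketch below builds such a definition in Python for a Data Lake Storage Gen2 account. The function name and the sample values are hypothetical, and the property names (`AzureBlobFS`, `servicePrincipalId`, `servicePrincipalKey`, `tenant`) reflect our understanding of the connector's JSON format; newer connector versions may name the credential property differently, so compare against the JSON that your own factory generates.

```python
import json


def service_principal_linked_service(name, account, tenant_id, application_id, client_secret):
    """Build an ADLS Gen2 linked service definition that uses a service principal."""
    return {
        "name": name,
        "properties": {
            "type": "AzureBlobFS",  # connector type for Data Lake Storage Gen2 (assumed)
            "typeProperties": {
                "url": f"https://{account}.dfs.core.windows.net",
                "tenant": tenant_id,                   # Directory ID of the app
                "servicePrincipalId": application_id,  # Application ID of the app
                "servicePrincipalKey": {               # client secret created for the app
                    "type": "SecureString",
                    "value": client_secret,
                },
            },
        },
    }


# Placeholder values for illustration only; never hard-code a real secret.
definition = service_principal_linked_service(
    name="AdlsGen2ServicePrincipal",
    account="mystorageaccount",
    tenant_id="22222222-2222-2222-2222-222222222222",
    application_id="33333333-3333-3333-3333-333333333333",
    client_secret="<client-secret>",
)
print(json.dumps(definition, indent=2))
```

Note that the definition carries three identifying values (tenant, application ID, secret) on top of the storage URL, which is the extra layer discussed next.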

With the service principal, we supply one more detail in addition to the storage account name and the client secret (Service principal key), viz. the Service principal ID, which is the Application ID of the AAD app. The AAD app acts as another layer of security for the system. However, it is still vulnerable to breaches from outside the organization, since the client secret can be leaked. This risk can be mitigated using the new feature in ADF, i.e. Managed Identity authentication to Azure Storage.

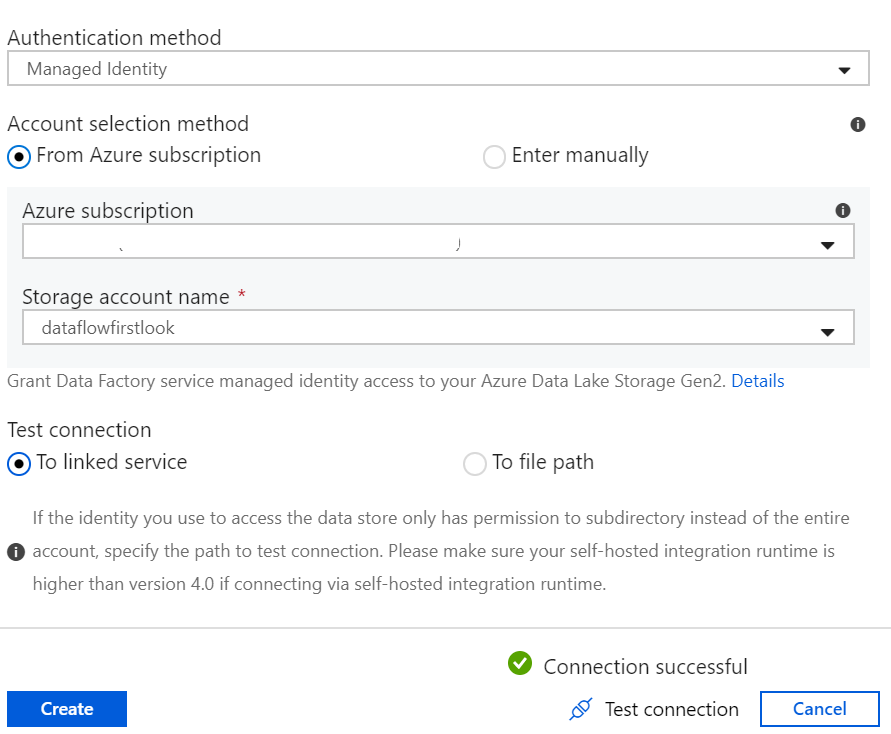

Managed Identity

With Managed Identity, the service principal is built in. To elaborate, Managed Identity creates an enterprise application for the data factory under the hood. This application is like the AAD app we created earlier, except that it does not allow secrets to be created (intuitive!).

Let us now add the Azure Data Factory as an app to the access control of the Storage Account. The name of our ADF is ‘adltoadl’.

Go to the access control panel of the storage account and add a new role assignment for the data factory, as shown below.

Now, going back to ADF, use Managed Identity and connect to the same storage.
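For comparison, here is a minimal sketch of what a Managed Identity linked service definition might look like for the same Data Lake Storage Gen2 account. The function name and sample values are hypothetical, and the `AzureBlobFS` type and `url` property reflect our understanding of the connector's JSON format. The point to notice is that the definition contains no tenant, application ID or secret.

```python
import json


def managed_identity_linked_service(name, account):
    """Build an ADLS Gen2 linked service definition that relies on the factory's managed identity."""
    return {
        "name": name,
        "properties": {
            "type": "AzureBlobFS",  # connector type for Data Lake Storage Gen2 (assumed)
            "typeProperties": {
                # Only the endpoint is needed; ADF authenticates as itself.
                "url": f"https://{account}.dfs.core.windows.net",
            },
        },
    }


# Placeholder values for illustration only.
definition = managed_identity_linked_service("AdlsGen2ManagedIdentity", "mystorageaccount")
print(json.dumps(definition, indent=2))
```

Access is then governed entirely by the role assignment given to the data factory on the storage account.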

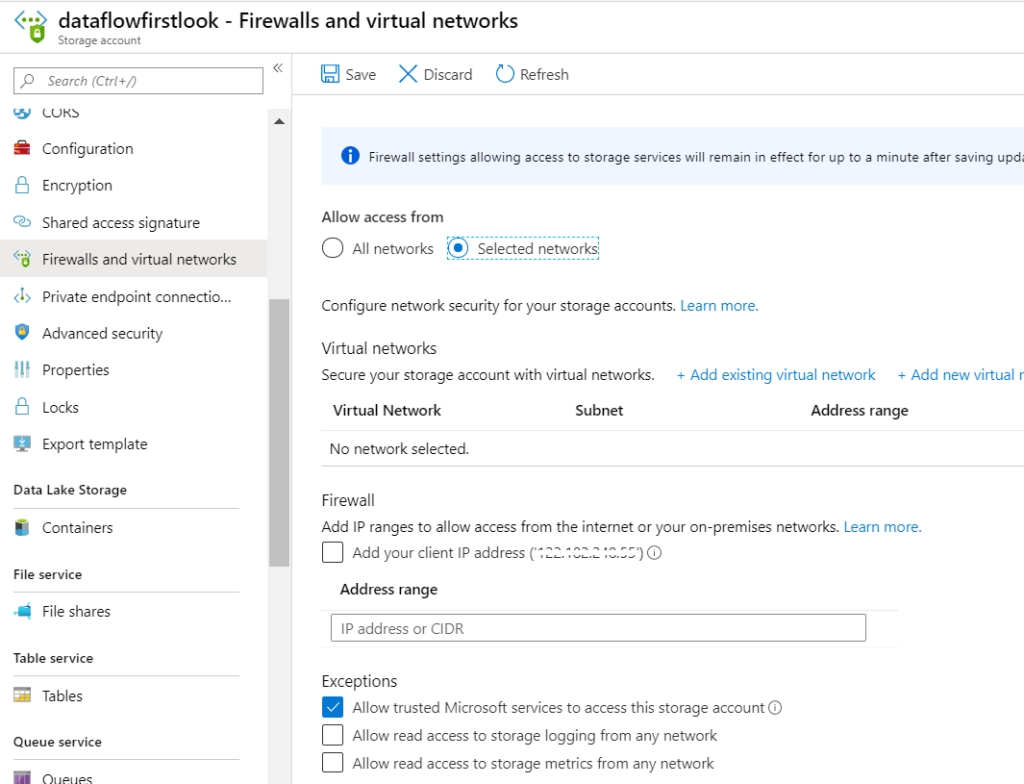

As a prerequisite to this, go to Firewalls and virtual networks in your storage account and check the first exception as shown below.
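Assuming the exception in question is the one that allows trusted Microsoft services to reach the storage account, it shows up in the storage account's resource definition as the `bypass` setting of its network rules. The sketch below is a small, hypothetical helper that checks whether a given network rule set includes that bypass; the sample rule sets are made up for illustration, and the `bypass` and `AzureServices` names should be confirmed against your own account's exported template.

```python
def allows_trusted_services(network_acls):
    """Return True if the storage network rules let trusted Azure services bypass the firewall."""
    # 'bypass' is a comma-separated string such as "Logging, Metrics, AzureServices".
    bypass = network_acls.get("bypass", "")
    return "AzureServices" in [item.strip() for item in bypass.split(",")]


# Made-up rule sets for illustration only.
with_exception = {"defaultAction": "Deny", "bypass": "Logging, Metrics, AzureServices"}
without_exception = {"defaultAction": "Deny", "bypass": "None"}

print(allows_trusted_services(with_exception))
print(allows_trusted_services(without_exception))
```

A check like this can be useful when auditing several storage accounts before switching their linked services to Managed Identity.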

As far as the advantages of Managed Identity are concerned, there is no secret to leak, so there is no way for someone outside the organization to access your storage through the Azure Data Factory.

Conclusion

Hope you liked this article. Please note that this article is only for information.

When is SAS recommended?

A shared access signature (SAS) is recommended when you want to share some specific data with external users for a limited amount of time.