In our article on Causal Inference in Observational Studies, we studied the motivation for the same using the example of Simpson’s Paradox. It highlights the importance of observational studies and motivates the Inference aspect of Causality.

Before that, we discussed the motivation for Causal AI in the article Mind over Data – Towards Causality in AI. In that article, we read about the three ladders of Causation viz. Association, Interventions, and Counterfactuals. We had an intuition as to why current AI algorithms lack Causal Understanding. This was a motivation for Algorithmic Causality.

Having said that, a newbie like me could be lost in a laundry list of tools, techniques, and math. It’s helpful if one gets to understand the broad swimlanes in which Causal Research is carried out. Hence, I decided to write about the three major swimlanes in which the research and industrial practice happen in Causality. Below are the three major swimlanes.

Causal Inference

This is the oldest and perhaps the most aligned with traditional statistics amongst swimlanes of Causality. It comprises inferring the Cause Effect Relationships between variables from Observational Data. It is somewhat similar to Inferential Statistics in principle. For instance, you have a hypothesis about the Causal Relationship between smoking and lung cancer. You create a Causal Model and test it against the data.

There are two major frameworks in Causal Inference viz. Potential Outcomes Framework and Structural Causal Models. The potential outcomes framework is a school of causality to infer the treatment effect of binary variables. It relies on a Statistical estimand to calculate a Causal quantity.

Another school of thought is the Structural Causal Models paradigm which is perhaps, the most comprehensive framework in Causal Inferencing. It uses graphical models (Directed Acyclic Graphs, also called DAGs) to model the data-generating process, which is then tested against the available data. Moreover, it provides us with tools to model interventions, which is crucial to decision-making.

Causal Discovery

In an ideal world, we may have small datasets and domain experts to help us build causal models and test them against data. However, it’s far from reality. Domain knowledge is scarce and datasets are huge. This necessitates the role of Causal Discovery. Causal Discovery or structural identification is a subfield of Causality that aims to construct DAGs from Observational Data. In other words, it aims to infer Causal Structure from Data.

To be honest, this is not so straightforward and there are no standard ways since many Causal Structures could be derived from the same data. However, there are a few algorithms that may help. By no means, they are exhaustive.

- Conditional Independence Testing: The key idea here is that two independent variables are not causally linked. This aids the elimination of edges in an undirected graph.

- Greedy search of Graphs: An example is the Greedy Equivalence Search algorithm. It starts with an empty graph and iteratively adds edges to maximize a score(e.g. Bayesian Information Criterion).

- Exploiting Asymmetries: The fact that Causal relations are asymmetric could be leveraged to find Causal Model candidates.

We hope to cover these in a future article.

Causal AI/ML

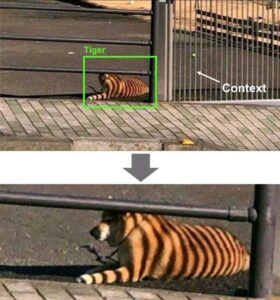

There was a hilarious meme doing rounds on the internet saying that data without context is noise.

The context in ML/DL algorithms would be Causality. If the above Computer Vision could decipher that the bars cause the stripes, it would not have made such a blunder. This is the motivation for Causal AI. If the algorithm could think counterfactually, the result would have been different.

Causal AI is AI that can reason causally, perform interventions and analyze counterfactuals. However, it is easier said than done. But, techniques like Causal Markov Kernels exist. The author is still exploring this space and will keep the reader updated on the same.

Conclusion

While the idea of Causality is not new, the field has gained momentum off late, thanks to pioneers like Dr Judea Pearl. Having said that, this article is based on the author’s knowledge till date. Each of these fields are evolving rapidly. We hope that the readers find inspiration and blaze their own trails. Finally, this article is for information only. We do not claim any guarantees regarding it’s accuracy or completeness.

References: Causal Discovery